Introduction

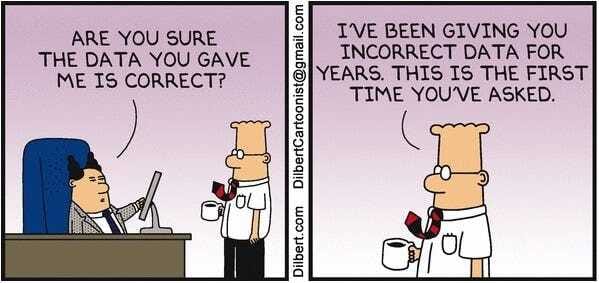

Organisations depend on data to make crucial business decisions in today's data-driven world. However, as data volumes soar and systems become more intricate, ensuring data quality, reliability, and performance across different pipelines has become a real challenge. This is where the principles of data Observability step in, offering a proactive approach to data management. The goal is to give businesses and data consumers real-time insight into their data ecosystem so they can spot issues early, stay ahead of the game, and contribute to a better overall customer experience.

What is Data Observability?

Data Observability provides full visibility into the health of your data and data systems, allowing you to be the first to know when data is wrong, what broke, and how to fix it. It's a comprehensive approach that tracks data in real time as it moves along the data pipeline, providing transparency into any issues that arise.

The concept of data Observability has evolved significantly since its inception in 2019. Today, solutions in this category feature machine-learning anomaly detection, accelerated root cause analysis capabilities, and end-to-end data lineage. This approach results in healthier data pipelines, increased team productivity, enhanced data management practices, and, ultimately, higher customer satisfaction.

The Five Pillars of Data Observability

Data Observability stands on five key pillars:

Freshness: How up-to-date is your data? Fresh data means timely insights.

Quality: Is your data accurate and consistent? High-quality data builds trust.

Volume: Are you handling the right amount of data? Monitoring volume helps manage storage and detect anomalies.

Schema: Is your data structured correctly? A proper schema ensures data is organized and usable.

Lineage: Where did your data come from, and how has it changed? Understanding lineage helps trace errors back to their source.

Together, these components provide valuable insight into the quality and reliability of your data.
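To make the pillars more concrete, here is a minimal Python sketch of what checks for freshness, volume, schema, and quality might look like on a single batch of records. The "orders" batch, the column names, and every threshold are assumptions chosen purely for illustration, and lineage is left out because it describes relationships between datasets rather than a property of one batch.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical expectations for an "orders" batch; every name and threshold
# here is an illustrative assumption, not a recommended default.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "created_at": datetime}
MAX_STALENESS = timedelta(hours=1)
MIN_ROWS, MAX_ROWS = 2, 1000


def check_freshness(rows, now):
    """Freshness: the newest record is no older than MAX_STALENESS."""
    timestamps = [
        row["created_at"]
        for row in rows
        if isinstance(row.get("created_at"), datetime)
    ]
    if not timestamps:
        return False
    return now - max(timestamps) <= MAX_STALENESS


def check_volume(rows):
    """Volume: the batch size falls inside the expected range."""
    return MIN_ROWS <= len(rows) <= MAX_ROWS


def check_schema(rows):
    """Schema: every row has exactly the expected columns and types."""
    return all(
        row.keys() == EXPECTED_SCHEMA.keys()
        and all(isinstance(row[col], typ) for col, typ in EXPECTED_SCHEMA.items())
        for row in rows
    )


def check_quality(rows):
    """Quality: order ids are unique and amounts are non-negative numbers."""
    ids = [row.get("order_id") for row in rows]
    amounts_ok = all(
        isinstance(row.get("amount"), (int, float)) and row["amount"] >= 0
        for row in rows
    )
    return len(ids) == len(set(ids)) and amounts_ok


def run_checks(rows, now):
    """Run all four checks and report a pass/fail flag per pillar."""
    return {
        "freshness": check_freshness(rows, now),
        "volume": check_volume(rows),
        "schema": check_schema(rows),
        "quality": check_quality(rows),
    }


# Example batch with a fixed "current time" so the result is reproducible.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
batch = [
    {"order_id": 1, "amount": 9.99, "created_at": now - timedelta(minutes=5)},
    {"order_id": 2, "amount": 20.0, "created_at": now - timedelta(minutes=30)},
]
print(run_checks(batch, now))  # all four checks pass for this batch
```

Dedicated Observability tools typically learn thresholds like these from historical behaviour rather than hard-coding them, but the questions being asked are the same.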

Observability vs. Data Observability: Key Differences

While the terms "Observability" and "Data Observability" might seem interchangeable, they serve distinct purposes in the modern enterprise. Let's explore the key differences:

1. Historical Context:

Observability has a long history, dating back to Hungarian-American engineer Rudolf E. Kálmán in the 1960s. Based on control theory, Observability refers to the ability to infer a system's internal states from its external outputs.

Data Observability is a relatively new concept that has become critical in just the past several years, driven by the increasing importance of data in modern enterprises.

2. Focus and Scope:

Observability primarily focuses on monitoring and managing a system's performance and health end to end. It's often associated with telemetry signals such as logs, metrics, and traces.

Data Observability concentrates specifically on the health of analytical data pipelines and the quality of data flowing through them. It deals with visibility, control, and optimization of distributed, diverse data infrastructures.

3. Target Users:

Observability serves IT managers, DevOps teams, and site reliability engineers (SREs). It's used by those overseeing general system performance and application health.

Data Observability caters to data engineers, data architects, chief data officers, data scientists, and analytics officers. It's designed for teams directly responsible for managing and optimizing data pipelines.

4. Primary Goals:

Observability aims to provide telemetry on monolithic and microservice applications, often focusing on less data-intensive and distributed systems.

Data Observability strives to ensure the reliability of data pipelines, build trust in data products, and meet demanding Service Level Agreements (SLAs) for data-driven business needs.

5. Data Types and Sources:

Observability deals with metrics, events, logs, and traces essential for monitoring and security operations. This data is often a byproduct of other work and can be voluminous.

Data Observability focuses on transactional data and the complex pipelines moving data from operational databases to analytical data warehouses.

6. Tooling and Capabilities:

Observability relies on processes, monitoring concepts, and tooling organised around events. This tooling is improving greatly, but it is generally not yet well equipped to handle the complexity of modern distributed data infrastructures.

Data Observability requires specialized tools that provide holistic views of entire data ecosystems, offering predictive analysis of data pipeline issues and automation capabilities.

Understanding these differences is crucial for organisations to implement the right Observability strategies for their specific needs and to maximize the value of their data and systems.

Why is Data Observability Important?

Data Observability is crucial because it drastically reduces the impact of data quality issues and empowers data teams to programmatically scale robust end-to-end quality practices across the breadth of their data environment. As organisations unlock additional value from their data with analytical, machine learning, and AI use cases, data downtime will become more costly, justifying the direction of additional resources towards improving data quality.

The primary benefits of data Observability include:

Improved Data Trust: Organisations can foster greater trust in their data products by ensuring data quality and reliability.

Immediate Time-to-Value: Automated tooling like ML monitors and lineage provides quick insights and solutions.

More Focused and Efficient Data Teams: Data teams can spend less time firefighting and more time on strategic initiatives.

Reduced Impact of Data Quality Issues: Proactive detection and resolution of issues minimize the impact on business operations.

Scalable Data Quality: Maintain data quality at any scale, even as your data ecosystem grows and becomes more complex.

How Data Observability Works

Data Observability uses various tools and techniques to keep your data in check:

Automated Monitoring: Tools continuously watch your data for signs of trouble.

Machine Learning: AI helps detect anomalies that might be missed by human eyes.

Root Cause Analysis: When something goes wrong, these tools help you figure out why.

Data Lineage Tracking: This shows you the journey of your data, making it easier to pinpoint where things went off track.
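The techniques above can be sketched in a few lines of Python. This example is a simplification under stated assumptions: a plain z-score on daily row counts stands in for the machine-learning monitors that real tools use, and the lineage graph, dataset names, and row counts are all hypothetical. It flags an unusual row count and then walks the lineage upstream to suggest where a problem may have started.

```python
from collections import deque
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it lies more than `threshold` standard deviations
    from the mean of `history` (a simple stand-in for an ML monitor)."""
    if len(history) < 2:
        return False  # not enough history to estimate the spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


# Hypothetical lineage: each dataset maps to the datasets it is built from.
LINEAGE = {
    "revenue_dashboard": ["daily_sales"],
    "daily_sales": ["orders_clean"],
    "orders_clean": ["orders_raw", "customers_raw"],
    "orders_raw": [],
    "customers_raw": [],
}

# Hypothetical monitoring data: dataset -> (past daily row counts, latest count).
ROW_COUNTS = {
    "orders_raw": ([1000, 1020, 980, 1010, 990], 400),
    "customers_raw": ([500, 505, 495, 510, 490], 502),
}


def upstream(dataset, lineage):
    """Return every dataset that `dataset` depends on, nearest first."""
    seen = []
    queue = deque(lineage.get(dataset, []))
    while queue:
        node = queue.popleft()
        if node not in seen:
            seen.append(node)
            queue.extend(lineage.get(node, []))
    return seen


def suspect_sources(dataset, lineage, row_counts):
    """Root cause hint: upstream datasets whose latest row count looks anomalous."""
    return [
        name
        for name in upstream(dataset, lineage)
        if name in row_counts and is_anomalous(*row_counts[name])
    ]


print(suspect_sources("revenue_dashboard", LINEAGE, ROW_COUNTS))
```

In this made-up scenario the dashboard's only suspicious ancestor is `orders_raw`, whose row count dropped sharply, which is the kind of pointer that shortens root cause analysis.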

Real-World Applications of Data Observability

Imagine you're running an e-commerce store. Your sales data, customer analytics, and inventory levels must be spot-on to make informed decisions. Data Observability ensures that all these data points are accurate and up-to-date, helping you avoid stockouts, optimize marketing campaigns, and improve customer satisfaction.

In another scenario, consider a financial institution using data for risk assessment and fraud detection. Data Observability can ensure that the data used for these critical processes is always accurate, fresh, and compliant with regulatory requirements.

Implementing Data Observability

To successfully implement data Observability, you'll need three things:

1. A keen understanding of your data infrastructure: This includes knowing where data is sourced from, how it moves along the pipeline, and where it resides.

2. Appropriate tools and instrumentation: Select tools that can easily integrate with your existing data infrastructure and provide comprehensive monitoring capabilities. Consider factors such as scalability, integration capabilities, and ease of use.

3. The right monitoring systems: These should flag data inconsistencies and provide real-time alerts when issues arise.
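As a rough illustration of the third point, the sketch below shows one way a monitor could flag an inconsistency and raise an alert. It assumes a simple reconciliation check that compares row counts between an operational source and the warehouse; the function names, the 1% tolerance, and the `notify` callback are hypothetical. In practice `notify` would post to a paging or chat tool, and a scheduler would run the monitor continuously.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Alert:
    check: str
    message: str


def row_count_matches(
    source_rows: int, warehouse_rows: int, tolerance: float = 0.01
) -> Optional[str]:
    """Return a problem description if the counts differ by more than
    `tolerance` (relative to the source), otherwise None."""
    if source_rows == 0:
        drift = 0.0 if warehouse_rows == 0 else float("inf")
    else:
        drift = abs(warehouse_rows - source_rows) / source_rows
    if drift > tolerance:
        return (
            f"row counts differ by {drift:.1%} "
            f"(source={source_rows}, warehouse={warehouse_rows})"
        )
    return None


def run_monitor(
    checks: Dict[str, Callable[[], Optional[str]]],
    notify: Callable[[Alert], None],
) -> List[Alert]:
    """Run every named check once and send an alert for each failure."""
    alerts = []
    for name, check in checks.items():
        problem = check()
        if problem is not None:
            alert = Alert(name, problem)
            notify(alert)
            alerts.append(alert)
    return alerts


# Hypothetical checks; real ones would query the source system and the warehouse.
checks = {
    "orders_reconciliation": lambda: row_count_matches(1000, 940),
    "customers_reconciliation": lambda: row_count_matches(500, 500),
}

sent: List[Alert] = []  # stands in for a pager or chat integration
run_monitor(checks, notify=sent.append)
for alert in sent:
    print(f"[ALERT] {alert.check}: {alert.message}")
```

Keeping each check as a small function that returns either nothing or a description of the problem makes it easy to add new checks without touching the alerting logic.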

The Future of Data Observability

As we move into 2024 and beyond, data Observability is becoming increasingly essential, especially with the rise of AI and machine learning. Organisations that invest in robust data Observability practices will be better positioned to leverage their data assets, make more informed decisions, and stay ahead in an increasingly data-driven world.

Conclusion: The Synergy of Observability and Data Observability

As we've explored the world of data Observability and its crucial role in modern data management, it's important to recognize that true success in today's complex IT environments requires a holistic approach. This means embracing both traditional Observability and data Observability as complementary practices.

Observability, with its focus on system health, application performance, and infrastructure monitoring, provides the foundation for understanding how your systems are functioning. It gives you insights into the "how" and "what" of your technology stack. On the other hand, data Observability zeroes in on the quality, reliability, and performance of your data itself, answering the "why" behind your data-driven decisions. By implementing both Observability and data Observability, organisations can achieve:

Comprehensive Visibility: You gain a 360-degree view of both your systems and your data, leaving no blind spots in your IT and data ecosystems.

Enhanced Problem-Solving: When issues arise, you can quickly determine whether the root cause lies in your infrastructure, applications, or data pipelines.

Improved Data-Driven Decision-Making: With confidence in both your systems and data, you can make more informed and reliable business decisions.

Optimized Performance: By fine-tuning both your IT infrastructure and data processes, you can achieve peak performance across all aspects of your digital operations.

Increased Innovation: With a solid foundation of Observability across systems and data, teams can focus on innovation rather than firefighting.

Remember, in today's data-driven world, your systems and your data are intrinsically linked. A problem in one area can quickly cascade into the other. By embracing both Observability and data Observability, you create a robust framework that ensures the health, reliability, and performance of your entire digital ecosystem.

As you embark on your Observability journey, consider how you can integrate both system and data Observability practices into your organisation. This holistic approach will help you navigate the complexities of modern IT environments and unlock your data's full potential.

Ultimately, the synergy between Observability and Data Observability is your key to unlocking true digital resilience and success. It's time to take control of both your systems and your data, ensuring their quality, reliability, and performance to drive your organisation forward in this interconnected, data-driven world.

Until next time, happy observing!